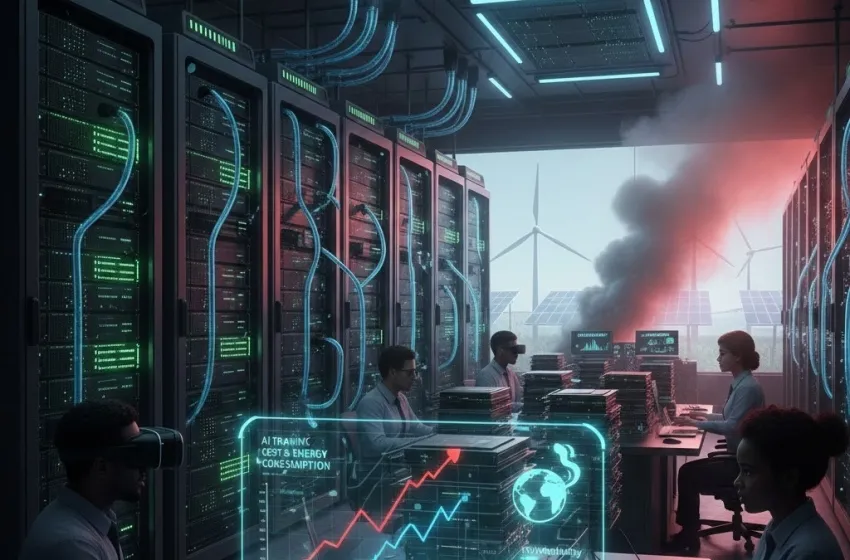

The Generative AI compute crisis stems from soaring AI training cost, GPU scarcity, and massive energy consumption, threatening AI sustainability.

The rapid, widespread adoption of generative Artificial Intelligence (AI) has sparked an innovation gold rush, but it has also ignited a crisis over computational resources. The power and hardware needed to train and run massive models, particularly large language models (LLMs) like GPT-4, are creating a perfect storm of unsustainable AI training cost, severe hardware shortages, and a massive environmental footprint. This is the Generative AI Compute Crisis, a critical challenge that threatens to slow progress and undermine efforts toward genuine AI sustainability.

The Shocking Scale of AI Compute Demand

The power of modern generative AI is directly correlated with the size of the models, which are measured in billions or even trillions of parameters (the internal weights the model learns). This scaling is the core driver of the compute crisis.

The Cost of Creation: AI Training Cost

Training a state-of-the-art LLM is an operation of unprecedented scale. The computational demands have grown exponentially, exceeding the historical pace of Moore's Law. While older, simpler models might have consumed a few megawatt-hours (MWh) of electricity, the newest frontier models demand significantly more:

- GPT-3 (175 billion parameters): Training was estimated to have consumed approximately 1,287 MWh of electricity.

- GPT-4 (reportedly around 1.7 trillion parameters; exact figures are proprietary and unconfirmed): The compute required for training is far higher, with some estimates suggesting it could exceed 50 GWh (50,000 MWh). That is comparable to the annual electricity consumption of several thousand average US homes.
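
To put the two training-energy estimates above on a common scale, the short sketch below converts them into household-years of electricity. The figure of 10,500 kWh per home per year is an assumption (a round number close to commonly cited US averages), and the function name is purely illustrative.

```python
# Rough conversion of training energy into "US household-years" of electricity.
# ASSUMPTION: an average US home uses about 10,500 kWh per year.
KWH_PER_HOME_YEAR = 10_500


def homes_equivalent(energy_mwh: float,
                     kwh_per_home_year: float = KWH_PER_HOME_YEAR) -> float:
    """Return how many homes `energy_mwh` could power for one year."""
    return energy_mwh * 1_000 / kwh_per_home_year  # MWh -> kWh


# Energy estimates quoted in the article; the GPT-4 value is speculative.
estimates_mwh = {
    "GPT-3 (estimated)": 1_287,
    "GPT-4 (speculative estimate)": 50_000,
}

for model, mwh in estimates_mwh.items():
    print(f"{model}: {mwh:,} MWh is about {homes_equivalent(mwh):,.0f} home-years")
```

Under that assumption, the GPT-3 estimate corresponds to a little over 120 homes for a year, and the 50 GWh figure to just under 4,800.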

This phenomenal consumption directly translates to a colossal AI training cost. Beyond the electricity bill, these costs include the capital expenditure (CapEx) for building and maintaining hyperscale data centers, purchasing and replacing thousands of high-end accelerators, and employing the specialist engineers required to manage these systems. The resulting price tag—potentially in the tens to hundreds of millions of dollars for a single foundational model run—makes advanced AI development a privilege reserved for only the largest, most well-funded tech giants.

The Cost of Use: Inference Demand

The crisis doesn't end with training. Every time a user interacts with a generative AI service, a process called inference, it consumes energy. A single query to an LLM like ChatGPT is estimated to consume about ten times as much electricity as a standard web search. Because models are now being integrated into search engines, writing tools, and countless other everyday applications, total inference energy demand is likely to become the dominant part of AI's energy footprint.
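
A back-of-the-envelope sketch shows how a small per-query cost compounds at scale. The 0.3 Wh figure for a web search and the daily query volume are assumptions introduced here for illustration; only the "about ten times" multiplier comes from the paragraph above.

```python
# Back-of-the-envelope estimate of fleet-wide inference energy.
# ASSUMPTIONS (not from the article): a web search uses about 0.3 Wh, and the
# daily query volume below is a made-up illustrative number.
WEB_SEARCH_WH = 0.3
LLM_MULTIPLIER = 10  # "about ten times" a web search, per the text above


def daily_inference_mwh(queries_per_day: int,
                        search_wh: float = WEB_SEARCH_WH,
                        multiplier: float = LLM_MULTIPLIER) -> float:
    """Total daily inference energy in MWh for a given query volume."""
    wh_per_query = search_wh * multiplier
    return queries_per_day * wh_per_query / 1_000_000  # Wh -> MWh


hypothetical_queries_per_day = 100_000_000
daily = daily_inference_mwh(hypothetical_queries_per_day)
print(f"{daily:,.0f} MWh per day, {daily * 365:,.0f} MWh per year")
```

With these placeholder inputs the total comes to roughly 300 MWh per day, so a little over four days of inference would match the 1,287 MWh estimated for training GPT-3. The real ratio depends entirely on the actual per-query energy and traffic, which are not public.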

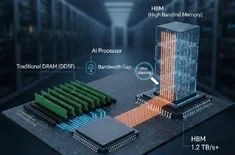

The Hardware Strain: GPU Scarcity and the Compute Bottleneck

The specific type of hardware required for deep learning is a primary point of failure in the AI supply chain.

The Reign of the GPU

Traditional computing relies on Central Processing Units (CPUs), but deep learning workloads are dominated by the massively parallel calculations needed for matrix multiplication. This task is well suited to Graphics Processing Units (GPUs), which were originally designed for rendering graphics. Today, these powerful accelerators, supplied predominantly by a single vendor, are the backbone of the AI industry.

The Reality of GPU Scarcity

The sudden, explosive demand for generative AI has created an extreme case of GPU scarcity. The limited manufacturing capacity for these highly specialized chips, combined with geopolitical factors, supply chain limitations, and the sheer volume required to equip a single large data center (often thousands of GPUs), has led to a major bottleneck.

This scarcity creates a domino effect:

- Massive CapEx: The few available GPUs command extremely high prices, further contributing to the prohibitive AI training cost.

- Delayed Development: Startups and smaller research labs struggle to access the necessary hardware, creating a significant barrier to entry and concentrating innovation among the richest corporations.

- The Compute Bottleneck: The constraint is not only raw compute power but also memory bandwidth and data transfer rates between accelerators. As models grow, they often exceed the memory capacity of a single GPU, so parameters and activations must be constantly exchanged across a large cluster. This data-movement problem, a close relative of the classic von Neumann bottleneck and often called the memory wall, means that even when enough raw compute exists, the time and energy spent moving data become the limiting factor, slowing training and wasting energy.
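
The memory side of this bottleneck can be seen with simple arithmetic. The sketch below assumes 16-bit weights (2 bytes per parameter) and an accelerator with 80 GB of memory; both values are illustrative assumptions rather than figures from this article, and the function names are hypothetical.

```python
import math

# Why large models spill across GPUs: the weights alone can exceed one device.
# ASSUMPTIONS: 2 bytes per parameter (16-bit weights) and 80 GB of memory per
# accelerator. Both are illustrative values, not taken from the article.


def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed just to hold the model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus_for_weights(num_params: float,
                         gpu_memory_gb: float = 80.0,
                         bytes_per_param: int = 2) -> int:
    """Lower bound on GPU count; ignores activations, gradients, optimizer state."""
    return math.ceil(weight_memory_gb(num_params, bytes_per_param) / gpu_memory_gb)


params = 175e9  # GPT-3 scale, as cited earlier in the article
print(f"weights: {weight_memory_gb(params):.0f} GB")
print(f"minimum GPUs for weights alone: {min_gpus_for_weights(params)}")
```

Under these assumptions a 175-billion-parameter model needs about 350 GB for its weights, so at least five such devices before any training state is counted. Every additional device adds inter-GPU traffic, which is where the data-movement cost comes from.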

The Environmental Toll: Energy Consumption and AI Sustainability

The most alarming aspect of the compute crisis is its environmental impact. The surge in energy consumption threatens to undermine global climate goals unless decisive action is taken.

The Data Center Power Grab

The collective electricity consumption of data centers, driven in large part by generative AI, is soaring. Global data center electricity consumption is projected to more than double by 2030, with AI identified as the primary catalyst.

- Carbon Footprint: Much of this energy is still sourced from fossil fuels. Training a model like GPT-3 released an estimated 552 metric tons of carbon dioxide, equivalent to the annual emissions of over a hundred passenger cars. This figure likely pales in comparison to the footprint of training GPT-4 or its successors.

- Water Footprint: The immense heat generated by dense GPU clusters necessitates sophisticated cooling systems, often using massive amounts of freshwater for evaporative cooling. Training GPT-3 in US data centers was estimated to have consumed 5.4 million liters of water, making the environmental cost of AI not just about carbon, but also about resource depletion.
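
The carbon comparison above can be checked with a few lines of arithmetic. The per-car figure of 4.6 metric tons of CO2 per year is an assumption (a commonly quoted value for a typical passenger vehicle); the 552-ton and 1,287 MWh figures are the GPT-3 estimates cited in this article.

```python
# Sanity check of the GPT-3 carbon figures quoted in the article.
# ASSUMPTION: a typical passenger car emits about 4.6 metric tons of CO2 a year.
TONNES_CO2_PER_CAR_YEAR = 4.6


def car_years(tonnes_co2: float,
              per_car: float = TONNES_CO2_PER_CAR_YEAR) -> float:
    """Number of cars whose annual emissions equal `tonnes_co2`."""
    return tonnes_co2 / per_car


def implied_intensity_g_per_kwh(tonnes_co2: float, energy_mwh: float) -> float:
    """Average grid carbon intensity implied by an emissions/energy pair."""
    grams = tonnes_co2 * 1_000_000  # metric tons -> grams
    kwh = energy_mwh * 1_000        # MWh -> kWh
    return grams / kwh


print(f"car-years: {car_years(552):.0f}")
print(f"implied intensity: {implied_intensity_g_per_kwh(552, 1_287):.0f} g CO2/kWh")
```

The result, about 120 car-years, is consistent with "over a hundred passenger cars", and the implied intensity of roughly 430 g CO2 per kWh indicates a grid mix with a substantial fossil-fuel share.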

The urgency to address these impacts has made AI sustainability a central, non-negotiable objective for the technology's future. The current trajectory is environmentally unsustainable, demanding a fundamental shift in how AI is designed, trained, and deployed.

Conclusion and the Path to Sustainable AI

The Generative AI Compute Crisis presents a formidable trifecta of obstacles: escalating AI training cost, a crippling compute bottleneck made worse by GPU scarcity, and an alarming rise in energy consumption. Allowing this scaling trend to continue unchecked will widen the technological and environmental divide, entrenching a handful of tech monopolies and putting genuine AI sustainability out of reach.

The path forward requires innovation on multiple fronts:

- Algorithmic Efficiency (Green AI): Developing more efficient model architectures, such as Mixture of Experts (MoE) models, which activate only a fraction of their parameters for each token they process and so substantially reduce the compute cost of inference.

- Hardware Innovation: Investing in specialized, energy-efficient AI chips (ASICs) and adopting advanced cooling methods like liquid cooling to reduce data center power and water needs.

- Distributed Computing: Utilizing techniques like Edge AI and federated learning to move computation closer to the user, decentralizing the load and reducing the energy spent on long-distance data transfer.
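
The Mixture of Experts idea in the first item above can be made concrete with a parameter count. Every size in this sketch is hypothetical and chosen only to make the arithmetic visible; it does not describe any particular model.

```python
# Fraction of parameters active per token in a Mixture of Experts (MoE) model.
# All sizes below are hypothetical, chosen only to illustrate the arithmetic.


def moe_param_counts(shared_params: float,
                     params_per_expert: float,
                     num_experts: int,
                     experts_per_token: int) -> tuple[float, float]:
    """Return (total parameters, parameters active for one token)."""
    if not 1 <= experts_per_token <= num_experts:
        raise ValueError("experts_per_token must be between 1 and num_experts")
    total = shared_params + num_experts * params_per_expert
    active = shared_params + experts_per_token * params_per_expert
    return total, active


total, active = moe_param_counts(
    shared_params=10e9,      # attention and embedding weights used by every token
    params_per_expert=5e9,   # size of one expert's feed-forward weights
    num_experts=16,
    experts_per_token=2,     # the router picks 2 of 16 experts per token
)
print(f"total: {total / 1e9:.0f}B, active per token: {active / 1e9:.0f}B "
      f"({active / total:.0%})")
```

In this example only about a fifth of the weights take part in each token's computation. The saving is in compute per token, not in memory: all experts still have to be stored and kept reachable, so MoE eases the energy problem more than the hardware-capacity one.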

Ultimately, the future of AI hinges on our ability to prioritize efficiency over brute force. The pursuit of ever-larger models must be tempered by a commitment to sustainability, ensuring that AI remains a tool for global progress rather than a catalyst for environmental decline.